I promised, months ago, to write about

> an example of a measurement cycle, including how I chose the questions, why they arose in the first place, and how students investigated them

I’ve tried all summer to write this blog post and failed, mostly because I’m discovering the weaknesses in my record-keeping. I’m going to answer as much of the question as I can, then make a few resolutions for improving my documentation.

Last year in DC Circuits (then in AC Circuits, and in Semiconductors 1 and 2), our days revolved around building and refining a shared model of how electricity works. There were two main ways we built the model:

- measuring things, then evaluating our measurements (aka “The Measurement Cycle”)

- researching things, then evaluating the research (aka “The Research Cycle”)

The measurement cycle

- In the process of evaluating some research or measurements, new questions come up. I track them on a mind-map (shown above).

- When I’m prepping our shop day, I pull out the list of questions and find the ones that are measurable using our lab equipment.

- I choose 4-6 questions. I’m ideally looking for questions that have obvious connections to what we’ve done before, that generate new questions, and that are significant in the electronics-technician world (mostly trouble-shooting and electrical sense-making).

- Things I think about: What are some typical ways of testing these questions? Do the students know how to use the equipment they will need? Is it important to have a single experimental design, or can I let each lab group design their own? Is there a lab in the lab-book with a good test circuit? Is there a skill on the skill sheet that will get completed in the course of this measurement? The answers to these questions will become my lesson plan.

- At the beginning of the shop period, I post the questions I expect them to answer and skills I expect them to demonstrate. We have a brief discussion about experimental design. Sometimes I propose a design, then take suggestions from the class about how to clarify it or improve it. Sometimes I ask the lab groups to tell me how they plan to test the question. Sometimes, I just ask for a “thumbs up/down/sideways” on their confidence that they can come up with a design and, if they’re confident, I turn them loose.

- If they will need a new tool to test the questions, we develop and document a “Hazard ID and Best Practice” for that tool. (More on this soon…)

- The students collect data — one data point for each question. When they finish (and/or, if they have questions), they put their names up on the white board.

- When a group finishes, they have to walk me through their data. I check their lab record against our “best practices for shop notebooks” (an evolving collection of standards generated by the class), and point out where they need to clarify/make changes. If their measurement process has demonstrated a skill that’s on the skill sheet, I sign it off. Then I take pictures of their lab notes, and they are done for the day. I run the pics through a document scanning app and generate a single PDF.

- On our next class day, everyone gets a copy of the PDF. I break them into 4-6 groups, one for every question they tested. No lab partners together in a group. Each group analyzes everyone’s data for a single question, makes a claim, and presents it to the class. The class helps the presenters reconcile any contradictions, then they vote on whether to accept the idea to the model. This process generates lots of new questions, some of which can’t be answered. They go on the list for next week.

- Repeat for 15 weeks.

Example from September


Students were evaluating their measurements to figure out “What happens to resistance when you hook multiple wires together?” Here’s the whiteboard they presented to the class. Lots of good stuff going on here: they’re taking note of the effect meter settings have on measurement, noticing that wires have resistance (even though they’re called “conductors,” not “resistors”), and they’re able to realize that the meter measures the resistance of the leads, as well as what’s connected to the leads. In case you can’t read their claim, it says “Longer or more leads we connect and measure the resistance, more resistance we get.”

Questions students were curious about

Here’s where this inquiry-style stuff pays me dividends: I’m anticipating the path of future questions, and I’m thinking maybe it will be “what happens when you hook things up in parallel or in other arrangements.” I am so wrong. The next question is, “is it exactly proportional?” Whoa. I love that they’re attending to the fact that things aren’t always proportional.

The next question surprises me even more. It’s “If this works for test leads, does it work for light bulbs/hookup wires/resistors too?”

I was kind of stunned by that. At this point, the model includes the idea that resistance varies with length, cross-sectional area, and material. This should lead us to expect different amounts of resistance from different materials, but not entirely different patterns of variance. Especially between test leads and hookup wires!
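The expectation in that paragraph can be written out as a short worked equation. This is a sketch under an assumption: that the class's model matches the standard textbook relation for a uniform conductor at a fixed temperature. The symbols ($\rho$ for the material's resistivity, $L$ for length, $A$ for cross-sectional area) are introduced here for illustration and are not taken from the class's own notes.

```latex
R = \rho \frac{L}{A}
\qquad\Longrightarrow\qquad
\frac{R(2L)}{R(L)} = \frac{\rho \, (2L)/A}{\rho \, L/A} = 2
```

Under that assumption, the material changes how much resistance a given length has (through $\rho$), but the ratio on the right does not depend on $\rho$ at all, which is why the same proportional pattern would be expected for test leads and hookup wire alike.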

On one hand, I’m afraid this means they think that light bulbs and hookup wire somehow obey fundamentally different physical laws than test leads. Their willingness to imagine the universe as disconnected and patternless offends against my sense of symmetry, I guess. I get over myself and realize that it’s awesome that they want their own sense of symmetry to be based on their own observations. So, I add it to the list of questions for the following week. I mentally curse the lab books of the world, which would have hustled the students past this moment without giving them a chance to notice their own uncertainty, which would then end up buried in their heads, a loose brick in the foundation of their knowledge, practically impossible to excavate.

How they investigated

The following week, we investigate “Is the change in resistance exactly proportional” and “do other materials do the same thing.” In our beginning-of-class strategy session, I tease out what exactly they want to know. Are they asking if the change in resistance is exactly proportional to … length? number of leads? What? They want to know about length, so that’s settled. There are lots of other questions on the docket that day, including

- Is there resistance in a terminal block?

- Can electrons get left behind in something and stay there? [I think this is a much more interesting way to ask it than the textbook-standard “Is current the same everywhere in a series circuit”]

- If electrons can get stuck, would it be a noticeable amount? Is that why a light dims when you turn it off? Are they getting lost or are they slowed down?

- Can more electrons pass through a terminal block than a wire?

- If you connect light bulbs end-to-end, we expect the total resistance to go up, but what will happen to the current? Is it the same as with one bulb? Will there be less electrons in bulb 3 than in bulb 1? Will the bulbs be dimmer as you go along?

They’re confident using the tools and materials, so I let them design their experiments however they want.

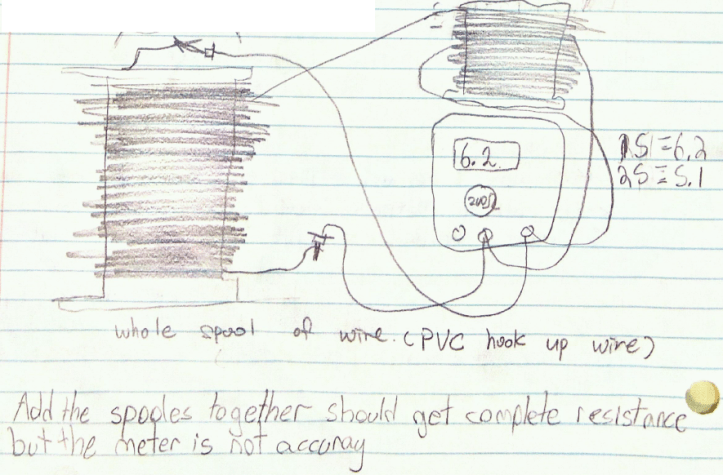

Some very cool experiments resulted. To check if total resistance in series was additive, one group used light bulbs, one used resistors, and (my favourite) one group removed two entire spools of hookup wire from the storage cabinet and measured the resistance of the spools separately, then connected in series (as shown).
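The additivity check these groups were running can be sketched in a few lines of Python. This is only an illustration: the function name `is_additive`, the 10% tolerance, and every reading below are invented for the example and are not the students' data.

```python
def is_additive(r_first, r_second, r_series, tolerance=0.10):
    """Return True if the series reading is within `tolerance`
    (as a fraction) of the sum of the two separate readings."""
    expected = r_first + r_second
    return abs(r_series - expected) <= tolerance * expected


# Hypothetical readings in ohms: (first part, second part, both in series).
# These numbers are made up for illustration only.
readings = {
    "resistors": (100.0, 220.0, 318.0),
    "wire spools": (1.2, 1.3, 0.9),
}

for name, (r1, r2, r_total) in readings.items():
    if is_additive(r1, r2, r_total):
        verdict = "consistent with additive"
    else:
        verdict = "not additive, worth a second look"
    print(f"{name}: expected about {r1 + r2:.1f} ohms, measured {r_total:.1f} ohms ({verdict})")
```

The tolerance is a design choice rather than a rule: real meter readings never add up exactly, so the question a group has to settle is how far off counts as "odd."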


This generated some odd data and some experimental design difficulties: there was no easy way to figure out the length of the spool. They could still tell that their data was odd, though, because the spools appeared visually to be about the same length, so whatever that length was, they should have roughly twice as much of it (short pause to appreciate that the students, not the teacher or textbook, made this judgement call about abstraction). Or, at least, the resistance should be more than that of one spool.

And that’s not what happened. If you look closely at the diagram, each spool appears to have 3 ends… Note that the sentence at the bottom shows that they distrust their meter. However, they did not fudge the data, despite not believing it was right. I believe that this is my reward for not grading lab books. Wait — not grading lab books is my reward for not grading lab books!

In the following class, this experiment generated no additions to the model but a mother lode of perplexity. It also resulted in demands for a standardized way of recording diagrams [Oh OK, since you insist…], and questions about what happens when you hook up components “side-by-side” instead of “one after the other.” And we’re off to the races again.

Speaking of standards for record-keeping…

It was really difficult to find the info for this blog post, because my record-keeping system last year was not designed to answer the question “how did questions arise.” It was intended to answer the question, “Oh God, what the heck am I doing?”

Some changes that will help:

- Using Jason Buell’s framework to keep whiteboards organized in Claim/Evidence/Reasoning style

- In the PDF record of students’ measurements, including a shot of the front-of-class whiteboard where I recorded the agenda

- Giving meaningful names to those PDF files. “20110928 lab data” is not cutting it.

- During class discussion, recording new questions next to the idea we were discussing when the question came up. Similarly, on the mind-map, attaching new questions to the ideas/discussions that generated them.

- Keeping electronic records of the analysis whiteboards (step 9 of the measurement cycle above), not just the raw data. Maybe distributing these to students as well, so they have a record for their notes when we inevitably have to revisit old ideas and re-evaluate them in light of new evidence.

Have a great year!