How Diodes Work

Questioning Authority and Building Models: New Semester Letter to Students

White Board Speed Dating

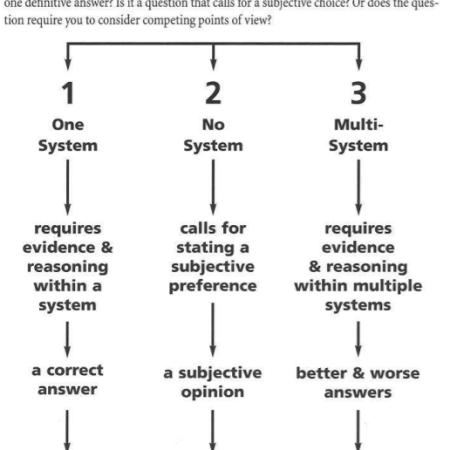

Assessment Q&A

Assessment Practices and Transforming Our Relationships to Power

Hacking the Marshmallow Challenge

Exploring Student-Designed Assessment using Standards-Based Grading

Exploring Student-Designed Curriculum