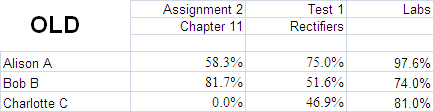

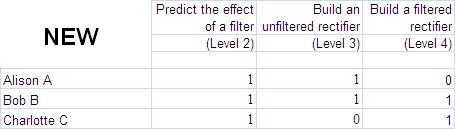

In January 2011, I overhauled my grading system, and it’s still evolving.

In the old system, I tracked 10 assignments, 15 labs, and 4 tests.

In the new system, I track about the same number of items, but they are “skills” that students must master — not tasks that they must complete. I still design learning activities, and some of them are quizzes, but there are some important differences.

For one thing, there is no grade for the whole quiz. When I correct quizzes, each question relates to a skill, and I mark whether it demonstrates the required level of mastery, or not. I don’t add them up at the end. The student gets a complete/incomplete grade for each “skill” that was tested.

Why?

I don’t need to know the average of their skills. I need to know what to help them improve before I send them out to industry to pop breakers or start fires. So I track each skill separately. In the new gradebook, above, I can see right away who needs help with what. Even better, the students can see for themselves what they need to work on.
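A minimal sketch of this kind of per-skill gradebook, assuming a simple mapping from student to skill to a complete/incomplete flag. The student names, skill names, and function names are hypothetical and not taken from the actual gradebook.

```python
# Hypothetical gradebook: student -> skill -> mastered yet?
# Names and skills are invented for illustration only.
gradebook = {
    "Student A": {"Measure AC voltage": True, "Use an oscilloscope": False},
    "Student B": {"Measure AC voltage": False, "Use an oscilloscope": False},
    "Student C": {"Measure AC voltage": True, "Use an oscilloscope": True},
}


def who_needs_help(gradebook):
    """Map each skill to the students who have not yet mastered it."""
    needs_help = {}
    for student, skills in gradebook.items():
        for skill, mastered in skills.items():
            if not mastered:
                needs_help.setdefault(skill, []).append(student)
    return needs_help


def to_work_on(gradebook, student):
    """List the skills one student still has to master."""
    return [skill for skill, mastered in gradebook[student].items() if not mastered]
```

Nothing is averaged here. The teacher's view (`who_needs_help`) and the student's view (`to_work_on`) are two readings of the same per-skill record.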

How do you get a grade out of that?

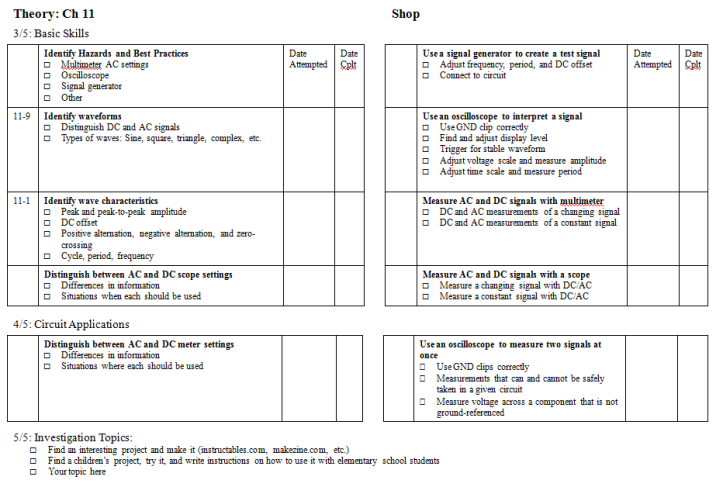

I hand out a skill-tracking sheet at the beginning of each unit (see example below). The skills are organized by complexity. “Required skills” are skills that students absolutely must master in order to pass. “Applications” are either less crucial or require combining skills. “Investigations” mean the student has to propose their own learning objective related to the course topic, then demonstrate it. The technical name for this is “conjunctive” grading, and I stole it from Kelly O’Shea.

If a student completes all the Required skills, they have a score of 3/5 — in other words, 60% (our pass mark). If they complete either the Applications I list, or an Investigation they choose, they have a score of 4/5. If they do both, they have 5/5 for that unit.

The catch is this: you can complete Applications and Investigations anytime, but you can’t get credit for them until you’ve finished the Required skills. If a student has most of the Required skills, all of the Applications, and has done an Investigation, they might still have a 2.5/5 for that unit (not yet passing). A student can’t get away with missing the fundamentals. But non-mandatory work isn’t “wasted”: in the example above, when the student finishes the Required skills, their score will jump to 5/5. At the end of the semester, if all the Required skills are complete, I average the unit scores and convert to a percentage (the College requires a number grade, but since it has zero effect on anyone’s life ever again, I don’t worry about it too much).
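The scoring rule above can be sketched as a small function. The post does not say how a score is calculated while Required skills are still outstanding. The proportional formula below is an assumption, chosen only because it reproduces the 2.5/5 example when 5 of 6 Required skills are done. The function and parameter names are hypothetical.

```python
def unit_score(required_done, required_total, applications_done, investigation_done):
    """Score one unit out of 5 under the conjunctive rule.

    Applications and Investigations earn credit only once every
    Required skill is complete.
    """
    if required_done < required_total:
        # ASSUMPTION: partial progress earns a proportional share of the
        # 3 "Required" points. The source only says such a student
        # "might still have a 2.5/5"; it does not give a formula.
        return 3 * required_done / required_total

    score = 3  # all Required skills complete: 3/5, the pass mark
    if applications_done:
        score += 1
    if investigation_done:
        score += 1
    return score
```

For example, `unit_score(5, 6, True, True)` stays below the pass mark, and the same student jumps to 5 as soon as the sixth Required skill is signed off.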

But. If there are outstanding Required skills, what I type into the school’s database is “Incomplete.” They can then negotiate with Student Services about a supplemental assessment or a learning contract. Guess what’s on the supp? You got it — the outstanding Required skills.
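As a rough sketch of the end-of-semester step: average the unit scores (each out of 5) and convert to a percentage, or report "Incomplete" if any Required skill is outstanding. Rounding to a whole number is an assumption, and the function name is hypothetical.

```python
def semester_grade(unit_scores, all_required_complete):
    """Return a percentage grade, or "Incomplete" if Required skills remain."""
    if not all_required_complete:
        return "Incomplete"
    average = sum(unit_scores) / len(unit_scores)  # average score out of 5
    # ASSUMPTION: round to the nearest whole percent.
    return round(average / 5 * 100)
```

With unit scores of 3, 4, and 5 the average is 4/5, which converts to 80. A student with any outstanding Required skill gets "Incomplete" no matter what the other unit scores are.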

That being said, I do my very best to undermine the “points-seeking economy.” The goal is to master skills, not get a number written on a piece of paper. One tool I use is “Critical Thinking Points.”

What are Critical Thinking Points?

Critical thinking points are available anytime a student figures out what caused someone to think something different than what they think. Including, what caused their own past self to think something they no longer think. Not “I was tired,” “I was overthinking it,” etc. But actual insight into what makes an alternative answer compelling. I often phrase it as “why might a reasonable person think that.” Besides helping us practice the valuable trait of intellectual humility, it also helps us understand, “what question is this the correct answer to?”

So now, when someone gives a well-thought-out “wrong” answer, or sees something good in an answer they disagree with, I add 1 to our class tally of critical thinking points. At the end of the semester, I divide the tally by the number of students and add the result straight onto everyone’s grade, assuming they completed the requirements to pass. I’m giving these things out by the handful. I hope everybody gets 100. Maybe the students will start to realize how ridiculous points are; maybe they won’t. They and I still have a record of which skills they’ve mastered; and it’s still impossible to pass if they’re not safe or not employable. Since their grades are utterly immaterial to absolutely anything, it just doesn’t matter. And it makes all of us feel better.
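The arithmetic of the collective fund might look like the sketch below. It assumes the bonus is in percentage points, that students with an "Incomplete" receive no bonus, and that grades are capped at 100. The cap and the function name are assumptions, not something the post states.

```python
def add_critical_thinking_bonus(grades, class_tally, cap=100):
    """Share the class tally equally and add it to every passing grade.

    grades maps each student to a percentage, or to "Incomplete".
    """
    bonus = class_tally / len(grades)  # same bonus for everyone
    result = {}
    for student, grade in grades.items():
        if grade == "Incomplete":
            # Requirements to pass not met: no bonus applied.
            result[student] = grade
        else:
            # ASSUMPTION: a grade cannot exceed the cap (100).
            result[student] = min(cap, grade + bonus)
    return result
```

Because the tally is shared, one student's well-explained mistake raises every passing student's grade by the same amount.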

In the meantime, the effect in class has been borderline magical. They are falling over themselves exposing their mistakes and the logic behind them, and then thanking and congratulating each other for doing it — since it’s a collective fund, every contribution benefits everybody. I’m loving it.

What Constitutes a Skill?

These 10 skills (below) make up the AC Measurement unit. A skill is either complete or it isn’t. There are no partial marks. To get credit for a skill, it must be mastered at the level defined on the rubric.

On the left are “theory” skills that can be assessed in any format. On the right are practical skills that require building or fixing something.

Reassessment, or, Have I lost my mind?

If this sounds punitive and like it might be impossible to pass, I assure you that grades have gone up, and so has the retention rate. The magic is in this one change: students can reassess any skill until the end of the semester.

This makes much less work for me.

I did a few things to simplify the system:

- I stopped agonizing over partial marks, and gave every skill either a “complete” (1) or “incomplete” (0) grade

I don’t feel bad about not giving credit for a partially right answer, because it’s not the only chance the student gets. When they’ve practised enough to feel confident that they’re ready, they ask for a reassessment. They can propose the content of the assessment, as long as it demonstrates the required skill. They can also choose the format. If they want, I’ll print off a quiz. Or they might send me a video of themselves explaining something, or write a paper. Or build a model. Or write a song. If they convince me that they have the skill, I update their grade to track their current level of understanding. If not, they can try again next week. This makes grading really speedy.

- Students analyze their mistakes, not me

The corollary to that is that, in order to apply for reassessment, the students have to find and fix their own mistakes. I often write narrative feedback, especially about what they’re doing well. But I used to spend hours poring over their work with a fine-toothed comb, analyzing where they went wrong and why. Guess whose skills that improved? Not the students’. Now they use the fine-toothed comb themselves. I grade less, they learn more.

- I also stopped assigning homework

We spend time practicing in class, and I teach students how to create their own problem sets if they need more. I provide a “first run” assessment of the week’s skills every Tuesday. Any skills that you master, you probably don’t need homework on. Any that you don’t master, you are responsible for creating appropriate practice to get ready to reassess, and demonstrating it to me before you apply. In other words, students create their own homework, based on their needs. I don’t have to create it, differentiate it, or grade it.

What about deadlines, and responsibility?

Each student can define their own deadline. Again, I coach them on this, and provide iterative feedback through the semester; trying to do everything in the last week will not work. We set a “default” due date as a class for each item. But any student can notify me of a change to their deadline until 4pm on the business day before it’s due. They can also extend it as many times as they need — again, by notifying me at least one business day ahead. If they miss the due date they themselves set, the skill receives a grade of zero, and it still has to be completed in order to pass! While this may seem draconian, it’s not.

Almost everybody misses a due date near the beginning of the year. I make sure they understand that they must complete the work, which now will not contribute to their grade. I also make sure they know that our goal is learning, not perfection. At the end of the year, I look at the overall pattern. The vast majority of the time, students have experimented with and learned to improve their skills at time management, just like everything else. Assuming this is the case, I reserve the right to change those zeroes back to ones.
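The notification rule (a deadline can be changed until 4pm on the business day before it is due) can be sketched as a cutoff calculation. This assumes business days are Monday to Friday, ignores holidays, and treats exactly 4:00pm as still on time. The function names are hypothetical.

```python
from datetime import date, datetime, time, timedelta


def change_cutoff(due):
    """Latest moment a student can change a deadline that falls on `due`."""
    day = due - timedelta(days=1)
    # ASSUMPTION: business days are Monday-Friday; holidays are ignored.
    while day.weekday() >= 5:  # 5 = Saturday, 6 = Sunday
        day -= timedelta(days=1)
    return datetime.combine(day, time(16, 0))  # 4pm on that business day


def can_change_deadline(due, now):
    """True if a change request made at `now` is still in time."""
    return now <= change_cutoff(due)
```

For a skill due on a Monday, the cutoff lands on the preceding Friday at 4pm, so a request sent over the weekend is too late.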

How do you reassess students’ skills?

During our scheduled time in the shop, they show me what they can do, and I chat with them about it. If I’m convinced, I sign it off on their skill sheet. Or they can submit evidence of mastery in some other format — I teach them how to make screencasts, phone videos, and other media for exactly this purpose.

Another option is to ask me to make them a fresh quiz. When students select this option, they have to let me know by Tuesday at the latest, by handing in their skills folder (sort of a portfolio) with evidence of what they did to increase their skill (practice problems, a circuit they built, or any other demonstration that they have improved). On Tuesday night I write back to them acknowledging the request, and asking for clarification or extra evidence if necessary. On Wednesday, I make up quizzes with all the questions people have requested. On Thursday afternoons, the students show up and choose buffet-style from the quizzes they need. As students finish, I review their answers with them so they know what they got right and why they had trouble with the rest.

What about synthesis?

If I need the students to use two skills together, I list three skills on the skill sheet: one for each individual skill and one for the combination (as in the example above). Also, Investigations are different from the others. You reach that level by doing something that shows your understanding of the links between topics, and requires you to choose your own problem-solving strategy. For example, last week we studied voltage doublers. Three students teamed up and made a multistage circuit that multiplied the voltage by 15, getting an output voltage over 100V. I didn’t teach them how to do that, but I did teach them the skills they needed to figure it out. It was up to them to take initiative, experiment, and decide how to check whether they had succeeded.

Is this the same as “outcomes-based” grading?

No. Skills-based grading is a simple shift from an aggregate grade (“Chapter 2 Test”) to individual grades for each skill contained in Chapter 2. It is agnostic about the authenticity or flexibility of the assessment. In my case, though, the increase in data caused huge ripple effects in everything I do. For details, see… the entire blog.

Does it work?

Looks like it, but new updates keep coming in. Stay tuned.

This is great, thanks for so much detail. Do you have a chance to have meta-discussions with your students to find out whether they think they’re learning more? If not, be careful, because I’ve found that once I opened that door it’s hard to get anything else done! I’m implementing SBG for the first time this semester in a junior-level Theoretical Mechanics class and we spend parts of almost every class period talking about how their learning can improve within the system. Of course I’m getting great feedback, and the students clearly feel as though they’re really a part of the process, but I think I need to find some balance.

*laugh* I know what you mean about conversation-sprawl. Strangely, we seem to be spending less class-time talking about learning than we did last semester. Maybe it’s because last semester I was more frustrated, or the students had more questions because targets were less clear? This semester, we’re also spending a lot less time on conversations like “is this on the test” and “why do I need to learn this” and “are you going to give a formula sheet.” Now that I think of it, I don’t remember the last time those came up. I’m mostly noticing the things I’m not hearing (people freaking out with test anxiety, or being angry that I expect them to grapple with confusion).

I am having lots of informal conversations with students, especially during shop (when most of the assessments are verbal) and the impressions seem positive, but that tends to be with one or two students at a time. I’m on the lookout for a good evaluation process, as a matter of fact, if anyone has any suggestions…