For the last two months, my students have been developing their own model of atoms and electricity. They judge new ideas according to their

- clarity

- precision

- internal consistency

- seamless chain of cause and effect

- connection to what’s already in the model

- sources’ credibility

- and predictive power.

Each week, their questions determine what we will explore in the lab. The rest of the time, they read each other's lab journals, develop standards for everything from measurement techniques to seating arrangements, and talk about what it means to know something, how to distinguish knowledge from belief, and what it means for an idea to be "true."

It’s WEIRD. My mouth has been hanging open since the second day. I’ve been taking time to record what’s happening, but my brain has been 100% occupied trying to digest it. Hence the radio silence; I haven’t had any energy to synthesize things into a readable form in months. Here’s a bit of an overview that I hope will get me back on track.

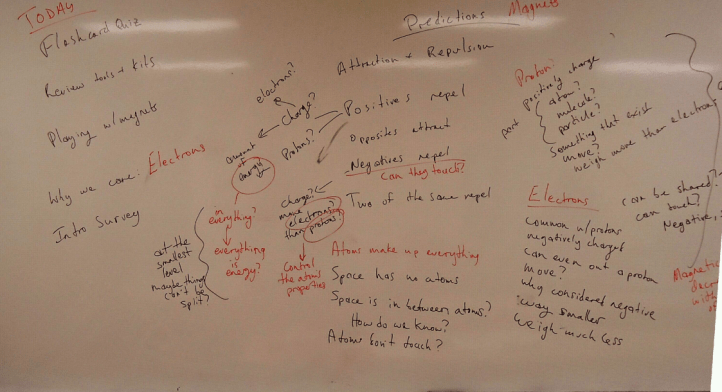

It Turns Out You Can’t Google Everything

On the very first day, we played with magnets and talked about our ideas. Then we discussed how those ideas might relate to atoms. I practiced some new techniques I wanted to try: writing down everything they said (misconceptions and all), and encouraging clarity (mostly by restricting my comments to “What do you mean by that exactly?” and “How do you know?”). That yielded questions like

- What’s the difference between charge and energy?

- Is charge just a way of measuring the amount of energy in something? Is there energy in everything?

- At the smallest level, maybe there are things that can’t be split?

- If atoms make up everything, but there are no atoms in space, what is space made of?

- Is there space between atoms? Is it the same as “outer space”? How do we know?

- Do electrons have to be considered negative, or is it just a way to distinguish from protons?

- Can protons move from atom to atom?

The following Friday, we were in the lab. I could have had them hook up a circuit and try to take some measurements, but I didn’t, mostly because that wasn’t what they were asking about.

I grouped their questions into topics (protons, electrons, atoms, charge) and asked each student to find three pieces of information for each topic, from any source they wanted. Each student sat at a computer, and I brought in a stack of related books from the library. I handed out modified Cornell paper (I generate it here) and instructed them to write what the source said on the right-hand side, what they thought (questions, inferences, comments, diagrams, anything at all) on the left-hand side, and a summary at the bottom.

It turned out that Wikipedia did not answer questions like "Is there a thin layer of air between all atoms?" (Real student question.) To answer a question like that, they had to use multiple sources, evaluate their credibility, and use inferences to link the information together. As a result, we started talking less about "information" and more about "well-reasoned" ideas and "good judgement."

Credits:

The criteria we're using in class to evaluate reasoning (our own, each other's, and that of our sources) are an amalgam inspired by Brian Frank and the Foundation for Critical Thinking. See especially the comments on Brian's post on assessing students' lab notebooks and this summary of the FCT's taxonomy of thinking.

Learning is messy and fun and evolving, and I love to see it in action. Thanks for sharing!

I like this list: clarity, precision, internal consistency, seamless cause/effect, connections to present model, source’s credibility, and predictive power.

A lot of my colleagues use the 3 C's: clarity, coherence, and causality. Clarity, I think, contains a sense of specificity (or precision); coherence contains both internal consistency (among ideas) and external consistency (with evidence). The seamless part of cause/effect is really important; I've noticed one colleague now uses the phrase "gapless story." Adding in credibility is nice, because it allows us to expand our evaluation to outside sources.

What I like about "connections to model" is the notion that new scientific ideas (whether refinements or departures) are always situated within our current understanding. Even radical new theories must be proposed in light of old theories. For that reason, there's a part of me that wants to think of predictive power as "driving power": not necessarily that an idea predicts, or even predicts correctly, but that good ideas drive communities forward with new questions, new investigations, etc. In that sense, good ideas (even wrong ones) connect us to the past and drive us toward future understanding.

Thanks, Brian. I picked up the "seamless" (or "gapless") part from you, and it's been very helpful. I flounder when I have to explain what it means for a conclusion to "follow" from its premises (I couldn't explain it in any terms that weren't just as confusing to the students), and my students had a really hard time evaluating whether an inference was valid. The idea of "seamlessness" helps students make sense of what we're doing. So far I've mostly been using it with chains of cause and effect, partly because that's where they have the most trouble.

I agree with you that a great criterion for evaluating ideas is their “driving power” — it sounds similar to what you’ve called the “generative” potential of questions. I don’t think I will ask my students to evaluate that just yet — mostly because I’m still not good at it myself…

Another thought: where do the “3 C’s” come from? Is this something that has spread by word of mouth or is there a resource I could check out?

Here are some resources (but perhaps not all) from which they derive:

- David Hammer et al., "Identifying inquiry and conceptualizing students' abilities" (2005)

- Sandoval & Reiser, "Explanation-driven inquiry…" (2004)

- Russ et al., "Recognizing elements of mechanistic reasoning in scientific inquiry" (2008)

Wow, what a great class!
